TL;DR

A randomized controlled trial by METR found that experienced open-source developers completed tasks 19% slower with AI tools, despite forecasting a 24% speedup; even after finishing, they estimated AI had made them 20% faster. The 43-percentage-point gap between expectation and reality means teams cannot self-correct. If your engineers feel more productive but your velocity metrics have not changed, this study explains why.

Part of the AI-Assisted Development Guide: from code generation to production LLMs.

The Study That Should Worry Every CTO

Most AI productivity claims come from surveys, vendor benchmarks, or anecdotes. The METR study is different.

METR (Model Evaluation and Threat Research) ran a randomized controlled trial, the gold standard in empirical research. Not a survey asking developers how they feel. Not a lab experiment with toy problems. A controlled experiment measuring actual performance on real tasks.

The design: 16 experienced open-source developers working on their own codebases. Not beginners fumbling through unfamiliar repositories. Not junior developers tackling interview-style problems. These were maintainers and significant contributors working on projects they knew intimately: projects they had written, maintained, and debugged for years.

Every developer worked under both conditions. Each of the 246 tasks was randomly assigned to either allow or disallow AI tools (developers mainly used Cursor Pro with Claude 3.5/3.7 Sonnet), so neither condition could systematically receive the easier work. The tasks covered bug fixes, feature implementations, refactoring, and test writing: the same work these developers do daily.

This is arguably the most rigorous study of AI-assisted development productivity published to date. And its findings contradict much of what the industry has been telling CTOs about AI tools.

The Numbers

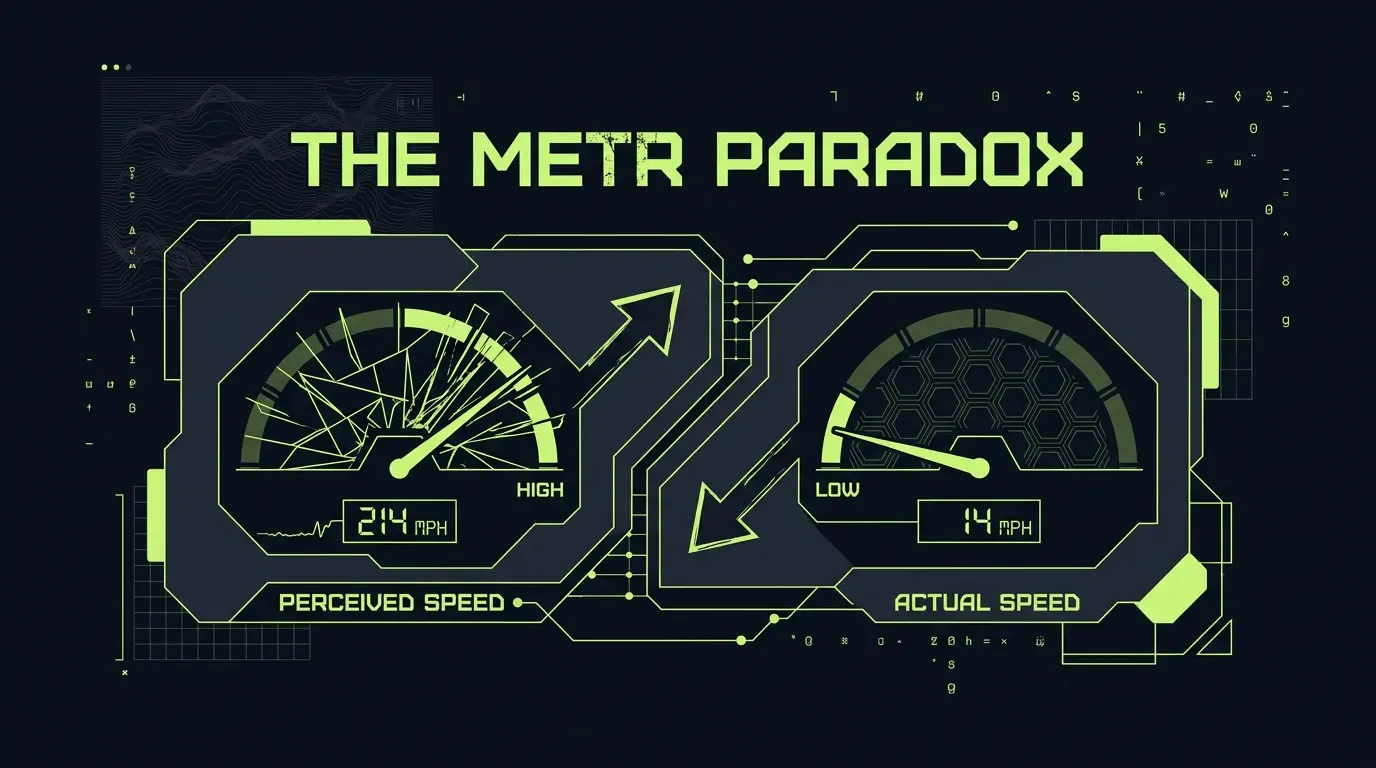

The headline results:

- 19% slower with AI tools (95% confidence interval: 3% to 38% slower)

- Expected 24% faster (developers' forecast before starting; after finishing, they still estimated a 20% speedup)

- 43-percentage-point perception gap between reality and belief

Read that again. Not 19% faster. 19% slower. And the developers themselves had no idea.

What This Means for Sprint Planning

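If your team adopted AI tools six months ago and estimated a 20-25% productivity gain based on developer feedback, and your engineers resemble METR's participants, your capacity model could be off by roughly 40 percentage points (an assumed 20-25% gain against a measured 19% slowdown). You may be planning sprints around output that does not exist.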

The math compounds. A team of 8 engineers at $180K average compensation represents $1.44M in annual engineering cost. If leadership assumed a 20% productivity gain and adjusted hiring plans, roadmap commitments, or headcount accordingly, they made a $288K decision based on a perception that the data contradicts.
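The arithmetic above is easy to rerun with your own numbers. Below is a minimal Python sketch; the function name and parameters are illustrative, and plugging in -0.19 assumes METR's point estimate applies to your team, which the study itself does not establish.

```python
def capacity_gap(engineers: int, avg_comp: float,
                 assumed_gain: float, measured_change: float) -> dict:
    """Compare an assumed AI productivity gain with a measured effect.

    Fractions, not percentages: +0.20 means 20% faster, and -0.19 means
    19% slower (METR's point estimate, used here only as an illustration).
    """
    annual_cost = engineers * avg_comp
    # Engineering spend leadership effectively "banked" by assuming the gain
    assumed_value = annual_cost * assumed_gain
    # Distance between the assumption and the measurement, in percentage points
    gap_pp = (assumed_gain - measured_change) * 100
    return {"annual_cost": annual_cost,
            "assumed_value": assumed_value,
            "gap_pp": gap_pp}


result = capacity_gap(8, 180_000, assumed_gain=0.20, measured_change=-0.19)
print(result["annual_cost"])           # 1440000
print(round(result["assumed_value"]))  # 288000
print(round(result["gap_pp"]))         # 39
```

At the upper end of the 20-25% range (assumed_gain=0.25), the gap widens to 44 points.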

What This Means for Headcount

89% of CTOs have already reported production disasters attributed to AI-generated code. If those same leaders are using developer self-reports to justify not backfilling positions or deferring hires, they are compounding a perception error with an organizational one.

The METR data does not say AI tools are useless. It says the feedback loop is broken. Engineers genuinely believe they are faster. They are not lying. They are experiencing a real cognitive distortion, and the organizational consequences are significant.

Why Experience Made It Worse

Here is the counterintuitive finding: METR did not test novices. They tested experts. Developers with deep knowledge of the codebases they worked on. And those experts got slower.

This contradicts the dominant narrative that AI helps most on complex, context-heavy work. The data suggests the opposite: the more you know about a codebase, the more AI tools may slow you down.

Three Plausible Explanations

1. Context switching cost. When a developer who already knows the answer stops to formulate a prompt, read the response, evaluate it, and integrate it, they have interrupted their own flow state. For someone who could have written the code in 90 seconds, spending 45 seconds prompting, 30 seconds reading, and 60 seconds verifying is a net loss. The AI interaction inserts a cognitive interruption into a process that did not need one.

2. Verification overhead. An experienced developer reading AI output does not accept it blindly. They check whether it matches the codebase's patterns, handles the edge cases they know about, and does not introduce regressions against the architectural constraints they carry in their head. This verification takes time, and for an expert who would have written correct code the first time, it is pure overhead.

3. False starts from hallucinated solutions. AI suggestions that look plausible but miss codebase-specific constraints send developers down investigation paths that would not have existed without the tool. An expert would have avoided those paths instinctively. The AI does not have the instinct, and now the expert spends time evaluating and rejecting suggestions they never needed.
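The first explanation's arithmetic, written out as a small Python sketch. The timings are the illustrative figures from that example, not measurements from the METR study, and the helper is hypothetical.

```python
def ai_detour_cost(manual_s: float, prompt_s: float,
                   read_s: float, verify_s: float) -> float:
    """Seconds lost (positive) or saved (negative) by routing a micro-task
    through an AI assistant instead of writing it by hand."""
    ai_path_s = prompt_s + read_s + verify_s
    return ai_path_s - manual_s


# 45 s prompting + 30 s reading + 60 s verifying vs. 90 s writing it directly
print(ai_detour_cost(90, 45, 30, 60))  # 45
```

The AI path only wins when writing the code by hand would take longer than prompting, reading, and verifying combined. The sketch also leaves out the cost of the broken flow state, which is real but hard to put a number on.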

The Expertise Paradox

The developers who benefit most from AI tools may be the ones who need the most help: juniors on unfamiliar codebases, where any suggestion has a reasonable chance of teaching something useful. The developers who benefit least are the ones organizations most want to accelerate: seniors who already know what to do and how to do it.

This creates an uncomfortable strategic question: are AI tools optimizing for the wrong segment of your engineering team?

The Macro Paradox

The METR study is a single data point. But it fits into a broader pattern that should concern anyone making organizational decisions about AI adoption.

The Pragmatic Engineer survey (n=906 developers) found:

- 95% use AI tools weekly

- 56% do 70% or more of their work with AI assistance

- Staff+ engineers lead adoption at 63.5%

AI tools are not being rejected. They are being adopted enthusiastically by exactly the senior engineers who, according to METR, get slower when using them.

The Organizational Productivity Plateau

Despite near-universal adoption, organizational productivity metrics have not moved. The most damning indicator: PR review time increased 91%. AI is generating more code faster, which creates more review burden for the same number of reviewers.

This is not a productivity gain. It is a workload redistribution. The AI shifts effort from writing to reviewing, but reviewing AI code takes longer than reviewing human code: reviewers must verify both correctness and fit with the codebase, and they cannot assume the submitting developer reasoned through every line the way an author would.

GitClear data reinforces this: refactoring declined 60% across codebases with heavy AI adoption. Teams are generating more new code and maintaining less of what already exists. That is not velocity. That is debt accumulation with a productivity label on it.

The CodeRabbit Signal

CodeRabbit's December 2025 analysis found AI-assisted code introduced 1.7x more bugs than human-written code. This is consistent with the METR findings: if AI tools slow down experienced developers while making them feel faster, one plausible mechanism is that subtle errors slip through because the tool's confident output partially bypasses the developer's internal quality gate.

Why We Cannot Feel the Slowdown

The 43-point perception gap is not a mystery. It is a predictable consequence of well-documented cognitive biases interacting with AI tool design.

Automation Bias

Humans systematically over-trust automated systems. This was documented in aviation decades before AI code tools existed. Pilots over-relying on autopilot. Operators ignoring warning signs because the automated monitoring said everything was fine. The pattern is identical: when a tool generates output that looks professional and confident, we assign it more credibility than it deserves.

AI coding tools produce syntactically perfect, well-formatted, confidently presented code. The presentation quality creates an implicit trust signal that has nothing to do with correctness.

The Effort Heuristic

More output feels like more productivity. When AI generates 200 lines of code in 30 seconds, the developer experiences a burst of visible progress. That feels productive even if those 200 lines later need 45 minutes of debugging, refactoring, or replacement.

The effort heuristic is particularly dangerous with AI tools because the generation step is dramatic and visible (a wall of code appearing instantly), while the correction step is gradual and invisible (scattered micro-edits over the next hour).

Confirmation Bias

Developers remember the wins. The time AI generated a perfect utility function in 5 seconds. The test scaffolding that saved 20 minutes. The regex they would have had to look up. These successes are concrete, memorable, and emotionally satisfying.

The losses are diffuse. The 15 minutes spent debugging a hallucinated API call. The 10 minutes verifying that a suggestion matched the codebase's error-handling pattern. The 5 minutes spent re-reading context that the AI interaction disrupted. These costs spread across the entire session and never crystallize into a memorable "AI slowed me down" moment.

Optimized for Feeling

This is the mechanism that makes self-correction impossible. AI tool UX is optimized for perceived productivity, not measured productivity. Fast code generation, confident presentation, inline suggestions that minimize friction: every design decision makes the tool feel fast, regardless of whether the end-to-end workflow is faster.

In my advisory work, I have seen this play out repeatedly. Engineering managers ask their teams whether AI tools help. The teams say yes, overwhelmingly. The sprint velocity metrics tell a different story: flat or declining. Nobody connects the two because the feedback from the team feels so certain.

When AI Actually Accelerates Work

This is not a Luddite argument. AI tools have genuine acceleration zones. The METR paradox does not mean AI is useless; it means the use cases where AI helps are narrower than the industry claims.

Short, Well-Scoped Tasks

When the "what" is clear and the "why" does not require deep codebase knowledge, AI delivers. Generating a TypeScript interface from a JSON payload. Writing a unit test for a pure function. Converting a callback to async/await syntax.

Boilerplate Generation

Repetitive code where the pattern is established and only the specifics change. API route handlers, form validation schemas, database model definitions, configuration files. The developer knows exactly what the output should look like. AI saves typing, not thinking.

Documentation

Generating JSDoc comments, README sections, and API documentation from existing code. AI reads the code and describes it, a task where the input (existing code) provides full context and the output (descriptions) carries low stakes if slightly imprecise.

Greenfield Exploration

When prototyping a new approach or exploring an unfamiliar library, AI provides a useful first draft to react against. Here the developer loses little, because there was no faster alternative: without AI, they would have been reading documentation and writing experimental code anyway.

The Pattern

AI excels where context is self-contained in the prompt, where the developer would spend time on mechanics rather than decisions, and where verification is trivial. It struggles, and can actively harm productivity, where codebase-specific knowledge, architectural judgment, and deep understanding of edge cases are required.

For experienced developers on mature codebases, the majority of their work falls into the second category. Which is exactly what METR measured.

What to Do With This Data

If you lead an engineering organization, here are five actions grounded in the METR findings.

1. Measure Velocity Before and After AI Adoption With Controls

Do not rely on developer self-reports. Track quantitative metrics: cycle time (commit to deploy), bug escape rate, time-to-resolution for incidents, and rework percentage (code changed within 14 days of merging).

Compare these metrics across a meaningful time window: at least one quarter before adoption and one quarter after. If you adopted AI tools without baseline measurements, start tracking now and compare quarter-over-quarter.
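A minimal Python sketch of the quarter-over-quarter comparison for two of these metrics, cycle time and rework percentage. The `MergedChange` record is a hypothetical schema you would assemble from your own VCS and deploy logs; in particular, counting lines reworked within 14 days requires line-level history (for example from blame data), which this sketch assumes you already have. Bug escape rate and time-to-resolution can be tracked the same way.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median


@dataclass
class MergedChange:
    """One merged change (hypothetical schema)."""
    first_commit_at: datetime
    deployed_at: datetime
    lines_added: int
    lines_reworked_14d: int  # lines from this change modified or deleted within 14 days of merge


def median_cycle_time_hours(changes: list[MergedChange]) -> float:
    # Cycle time: first commit to production deploy
    return median((c.deployed_at - c.first_commit_at).total_seconds() / 3600
                  for c in changes)


def rework_pct(changes: list[MergedChange]) -> float:
    added = sum(c.lines_added for c in changes)
    reworked = sum(c.lines_reworked_14d for c in changes)
    return 100 * reworked / added if added else 0.0


def compare_quarters(pre: list[MergedChange], post: list[MergedChange]) -> dict:
    """(before, after) pairs for a quarter before and a quarter after adoption."""
    return {
        "median_cycle_time_h": (median_cycle_time_hours(pre), median_cycle_time_hours(post)),
        "rework_pct": (rework_pct(pre), rework_pct(post)),
    }


# Made-up numbers, only to show the shape of the output
t0 = datetime(2025, 1, 6, 9, 0)
before = [MergedChange(t0, t0 + timedelta(hours=10), 100, 10),
          MergedChange(t0, t0 + timedelta(hours=20), 100, 10)]
after = [MergedChange(t0, t0 + timedelta(hours=30), 200, 50)]
print(compare_quarters(before, after))
# {'median_cycle_time_h': (15.0, 30.0), 'rework_pct': (10.0, 25.0)}
```

Medians are used for cycle time because a few long-running changes can otherwise dominate a quarter's average.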

2. Track Time-to-Resolution, Not Time-to-PR

AI tools accelerate the creation of pull requests. They do not accelerate the resolution of problems. If your team is opening more PRs but each one requires more review cycles, more post-merge fixes, and more back-and-forth, your PR throughput has increased but your productivity has not.

Time-to-resolution (from issue creation to verified deployment) captures the full cost of a change, including the review overhead, the debugging, and the rework that AI-generated code disproportionately requires.
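A small Python sketch of the distinction. Given three timestamps per issue (how you extract them from your tracker and deploy pipeline is an assumption left open here), it splits time-to-resolution into the part before the PR opens and the part after, which is where review rounds and rework show up. The function names are hypothetical.

```python
from datetime import datetime, timedelta


def _hours(delta: timedelta) -> float:
    return delta.total_seconds() / 3600


def lead_times(issue_created: datetime, pr_opened: datetime,
               verified_deploy: datetime) -> tuple[float, float, float]:
    """Return (time_to_pr, post_pr_time, time_to_resolution) in hours.

    post_pr_time covers review rounds, fixes, and rework after the PR
    opens: the part that time-to-PR hides.
    """
    return (_hours(pr_opened - issue_created),
            _hours(verified_deploy - pr_opened),
            _hours(verified_deploy - issue_created))


# Made-up example: PR opened two hours after the issue, deployed the next afternoon
print(lead_times(datetime(2025, 3, 3, 9), datetime(2025, 3, 3, 11),
                 datetime(2025, 3, 4, 15)))
# (2.0, 28.0, 30.0)
```

Aggregated across issues (for example, as medians per month), a shrinking time-to-PR alongside a flat or growing time-to-resolution is the pattern this section warns about.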

3. Run Your Own Internal Trial With Paired Engineers

The METR methodology is replicable at smaller scale. Take a sprint's worth of tasks. Randomly assign half to be completed with AI tools and half without. Use developers of comparable seniority. Track completion time, defect rate, and code review feedback.

Your codebase is not the same as METR's participants' codebases. Your results may differ. But you will have your own data rather than industry narratives. That data is worth the cost of one sprint.
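Once the sprint is done, you need an estimate and an honest interval. The Python sketch below compares geometric-mean completion times between the two arms and uses a percentile bootstrap for the interval. It is a simpler stand-in, not METR's exact analysis, and the function names are illustrative. With only a sprint's worth of tasks, expect a wide interval.

```python
import math
import random
from statistics import fmean


def pct_time_change(ai_hours: list[float], no_ai_hours: list[float]) -> float:
    """Percent change in typical completion time with AI (positive = slower).

    Compares geometric means, which keeps one very long task from
    dominating the result. All times must be positive.
    """
    log_diff = (fmean(math.log(h) for h in ai_hours)
                - fmean(math.log(h) for h in no_ai_hours))
    return 100 * (math.exp(log_diff) - 1)


def bootstrap_interval(ai_hours: list[float], no_ai_hours: list[float],
                       n_boot: int = 5000, level: float = 0.95,
                       seed: int = 0) -> tuple[float, float]:
    """Percentile bootstrap interval for pct_time_change."""
    rng = random.Random(seed)
    # Resample each arm with replacement and recompute the estimate each time
    stats = sorted(
        pct_time_change(rng.choices(ai_hours, k=len(ai_hours)),
                        rng.choices(no_ai_hours, k=len(no_ai_hours)))
        for _ in range(n_boot)
    )
    k = round((1 - level) / 2 * n_boot)  # samples trimmed from each tail
    return stats[k], stats[n_boot - 1 - k]


# Hypothetical completion times in hours
ai = [3.5, 5.0, 2.0, 8.0, 4.5, 6.0]
no_ai = [3.0, 4.0, 2.5, 6.0, 4.0, 5.0]
print(f"{pct_time_change(ai, no_ai):+.1f}%", bootstrap_interval(ai, no_ai))
```

Resampling each arm independently ignores the pairing from the assignment step. A paired analysis could give a tighter interval, but the independent version is harder to get wrong.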

4. Budget for Verification Overhead

If your team uses AI tools, your review process needs to account for the additional verification burden. With AI-generated code, reviewers must assess both correctness and fit with the codebase, without being able to rely on the context an author builds up by writing the code.

This means either allocating more reviewer time per PR, implementing automated checks that catch common AI failure modes (architectural violations, missing error handling, unused imports), or both. Do not assume AI saves time upstream and ignore the cost it adds downstream.
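As an example of the automated-check option, here is a minimal Python sketch that uses only the standard library's `ast` module. It flags two of the failure modes named above for Python code: unused imports, and exception handlers that are bare or do nothing but `pass`. Established linters such as Pyflakes (or Ruff's F401 rule) already cover unused imports more thoroughly; architectural rules specific to your codebase would need checks of their own.

```python
import ast


def find_unused_imports(source: str) -> list[str]:
    """Names imported in `source` but never referenced as a bare name."""
    tree = ast.parse(source)
    imported: set[str] = set()
    for node in ast.walk(tree):
        if isinstance(node, ast.Import):
            for alias in node.names:
                # `import os.path` binds the name `os`
                imported.add(alias.asname or alias.name.split(".")[0])
        elif isinstance(node, ast.ImportFrom):
            for alias in node.names:
                if alias.name != "*":
                    imported.add(alias.asname or alias.name)
    # Attribute access like `json.dumps` still starts from the Name `json`
    used = {n.id for n in ast.walk(tree) if isinstance(n, ast.Name)}
    return sorted(imported - used)


def find_swallowed_exceptions(source: str) -> list[int]:
    """Line numbers of bare `except:` handlers or handlers whose body is only `pass`."""
    flagged = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.ExceptHandler):
            bare = node.type is None
            only_pass = all(isinstance(stmt, ast.Pass) for stmt in node.body)
            if bare or only_pass:
                flagged.append(node.lineno)
    return sorted(flagged)


sample = """\
import os
import json
from typing import Any

def dump():
    try:
        return json.dumps({})
    except Exception:
        pass
"""
print(find_unused_imports(sample))        # ['Any', 'os']
print(find_swallowed_exceptions(sample))  # [8]
```

The unused-import check is deliberately naive: names referenced only inside string annotations or `__all__` would be flagged as false positives.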

5. Do Not Cut Headcount Based on Perceived Productivity Gains

This is the highest-stakes implication of the METR study. If your board or investors are asking why you need the same number of engineers when everyone is using AI, point to the data: the perceived gains may not be real, and cutting headcount based on perception will reduce actual capacity.

The 43-point perception gap means your engineers' honest assessment of their own productivity can be off by more than 40 percentage points. Building organizational strategy on that assessment is building on sand.

The Counter-Argument

The strongest objection to the METR study is the sample size. n=16 is small. 246 tasks across 16 developers provides useful signal but cannot claim statistical certainty for every subgroup and task type. The confidence interval is wide (3% to 38% slower), which means the true effect could be modest.

This is a legitimate criticism. But three things make the METR findings more credible than the sample size alone would suggest.

First, the methodology is the most rigorous in the field. Randomized controlled trial. Within-subject design (same developer, with and without AI). Tasks on real codebases, not synthetic benchmarks. Pre-registered analysis plan. This is not a Twitter poll or a vendor white paper.

Second, the direction is consistent with other evidence. CodeRabbit found 1.7x more bugs in AI code. GitClear found a 60% decline in refactoring. PR review time rose 91%. Organizational productivity metrics have not moved despite near-universal adoption. METR's finding (that AI tools slow down experienced developers while creating a perception of speed) offers a plausible mechanism for all of these other observations.

Third, the perception gap has independent significance. Even if the true slowdown is only 3% (the lower bound of the confidence interval), a 3% slowdown combined with a 24% perception of speedup is still a 27-point perception gap. That gap alone is enough to cause bad organizational decisions about hiring, capacity, and roadmap commitments.
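The same arithmetic across the whole confidence interval, as a small Python sketch (the helper name is illustrative). A perceived speedup and a measured slowdown point in opposite directions, so the gap in percentage points is their sum:

```python
def perception_gap(perceived_speedup_pct: float, measured_slowdown_pct: float) -> float:
    """Percentage-point gap between a perceived speedup and a measured slowdown."""
    return perceived_speedup_pct + measured_slowdown_pct


# METR's 95% interval for the slowdown, paired with the 24% forecast speedup
for label, slowdown in [("lower bound", 3), ("point estimate", 19), ("upper bound", 38)]:
    print(f"{label}: {perception_gap(24, slowdown)}-point gap")
# lower bound: 27-point gap
# point estimate: 43-point gap
# upper bound: 62-point gap
```

Using developers' post-study estimate (about 20% faster) instead of their 24% forecast lowers each gap by four points; even at the lower bound, the gap stays above 20 points.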

The burden of proof has shifted. If AI tool vendors want to claim productivity gains for experienced developers on mature codebases, they need evidence at least as rigorous as METR's study showing otherwise. Survey data and GitHub's own benchmarks (where the vendor measures the impact of its own product) do not clear that bar.

The Uncomfortable Conclusion

The AI productivity narrative is built on two foundations: vendor benchmarks and developer self-reports. METR undermined both.

Vendor benchmarks measure performance on narrow, isolated tasks (HumanEval, SWE-bench, standalone coding challenges) that do not resemble sustained development work on mature codebases. Developer self-reports are distorted by the cognitive biases that AI tool design amplifies.

What remains when you strip those away is a technology that accelerates boilerplate, assists with exploration, and helps with documentation, but slows down the core work of experienced developers on complex codebases while making them believe the opposite.

That does not mean AI tools should be abandoned. It means they should be adopted with the same rigor applied to any other engineering decision: measure the outcome, do not trust the feeling, and build organizational strategy on data rather than perception.

In my advisory work, I now recommend that every AI tool adoption include a measurement protocol. Not a survey. Not a retrospective. An actual measurement of cycle time, defect rate, and rework percentage, with a pre-adoption baseline.

The teams that do this will discover where AI genuinely helps their specific codebase and workflow. The teams that skip it will keep believing they are 24% faster while falling 19% behind.

Frequently Asked Questions

Does the METR study mean AI coding tools are useless?

No. The study found a 19% slowdown for experienced developers on their own codebases: a specific population doing a specific kind of work. AI tools likely provide genuine acceleration for junior developers on unfamiliar codebases, for boilerplate-heavy tasks, and for greenfield exploration. The finding is that the use cases where AI helps are narrower than the industry claims, and that developer self-reports are unreliable indicators of actual impact.

Is n=16 enough to draw conclusions?

It is enough to take seriously. The within-subject design (each developer serves as their own control) strengthens the statistical power, and 246 tasks provide meaningful signal. The 95% confidence interval (3% to 38% slower) excludes zero, so the effect is very likely a slowdown; the uncertainty is mainly about magnitude, not direction. Combined with consistent signals from CodeRabbit (1.7x bugs), GitClear (60% refactoring decline), and organizational productivity plateaus, the weight of evidence is substantial.

Why did developers perceive themselves as faster when they were slower?

Three cognitive biases interact: automation bias (over-trusting confident-looking automated output), the effort heuristic (visible code generation feels productive even when total time increases), and confirmation bias (memorable AI wins overshadow diffuse AI costs). AI tools are also designed to feel fast (instant generation, inline suggestions, minimal friction), which amplifies these biases. The perception gap is a predictable consequence of human cognition interacting with optimized tool UX.

Should I stop my team from using AI tools?

Not necessarily. But you should stop assuming AI tools make your team faster without measuring it. Implement quantitative tracking (cycle time, defect rate, rework percentage) with a pre-adoption baseline, run your own controlled experiment on a sprint's worth of tasks, and build your capacity plan on data rather than developer self-reports. The goal is informed adoption, not blanket acceptance or rejection.

How does this relate to the 89% of CTOs reporting production disasters from AI code?

The METR perception gap offers a plausible mechanism. If developers believe they are 24% faster when they are 19% slower, their sense of their own work is miscalibrated, and the same miscalibration can extend to quality: the tool's confident output creates a false sense of correctness, so subtle errors get less scrutiny than they need. The likely result: more code ships with less effective scrutiny, and production defects increase. The 89% disaster rate and the 43-point perception gap may be two sides of the same coin.

Making AI adoption decisions for your engineering team? I help CTOs build measurement-driven AI strategies that separate perception from reality.

- Technical Advisor for Startups: Data-driven AI governance

- AI Integration for SaaS: Responsible AI implementation

- Fractional CTO Services: Engineering leadership on demand

Continue Reading

This post is part of the AI-Assisted Development Guide, covering code generation, LLM architecture, prompt engineering, and cost optimization.

More in This Series

- AI-Assisted Development Guide: The comprehensive framework

- The Generative Debt Crisis: When AI code becomes liability

- AI Code Review: Catching what LLMs miss

- Stop Calling It Vibe Coding: What AI-assisted development actually requires

Evaluating AI's impact on your engineering team? Work with me on building a measurement-driven adoption strategy.