TL;DR

RAG is the fastest path from "we should add AI" to a shipped feature, but roughly 70% of the RAG implementations I've audited share one problem: they focus on the generation model and neglect retrieval quality. A pipeline with perfect retrieval and GPT-4 Turbo produces better answers than one with poor retrieval and Claude Opus. The architecture that works: semantic chunking (not fixed-size), hybrid search combining BM25 keyword matching with vector similarity, a reranking step before generation, and aggressive chunk overlap (20-30%). The median retrieval accuracy I see in production RAG systems is 62%; with hybrid search and reranking, that rises to 82-90%. Retrieval quality is the ceiling on your AI feature's usefulness: no amount of prompt engineering compensates for retrieving the wrong documents.

Part of the AI-Assisted Development Guide ... a comprehensive guide to building AI features that deliver real value.

Why RAG Instead of Fine-Tuning

Before diving into architecture, the decision framework for RAG vs. fine-tuning:

| Factor | RAG | Fine-Tuning |
|---|---|---|
| Data freshness | Real-time (data updated immediately) | Stale (requires retraining) |
| Cost | Pay per query (embedding + generation) | High upfront training cost |
| Hallucination control | Better (grounded in retrieved docs) | Worse (model memorizes patterns) |
| Data privacy | Data stays in your infrastructure | Data sent to training provider |
| Implementation time | Days to weeks | Weeks to months |
| Best for | Knowledge bases, docs, support | Tone/style, domain-specific tasks |

For SaaS products, RAG is the right choice 80% of the time. Your customers' data changes constantly. Fine-tuning requires retraining when data changes. RAG retrieves from the latest data on every query.

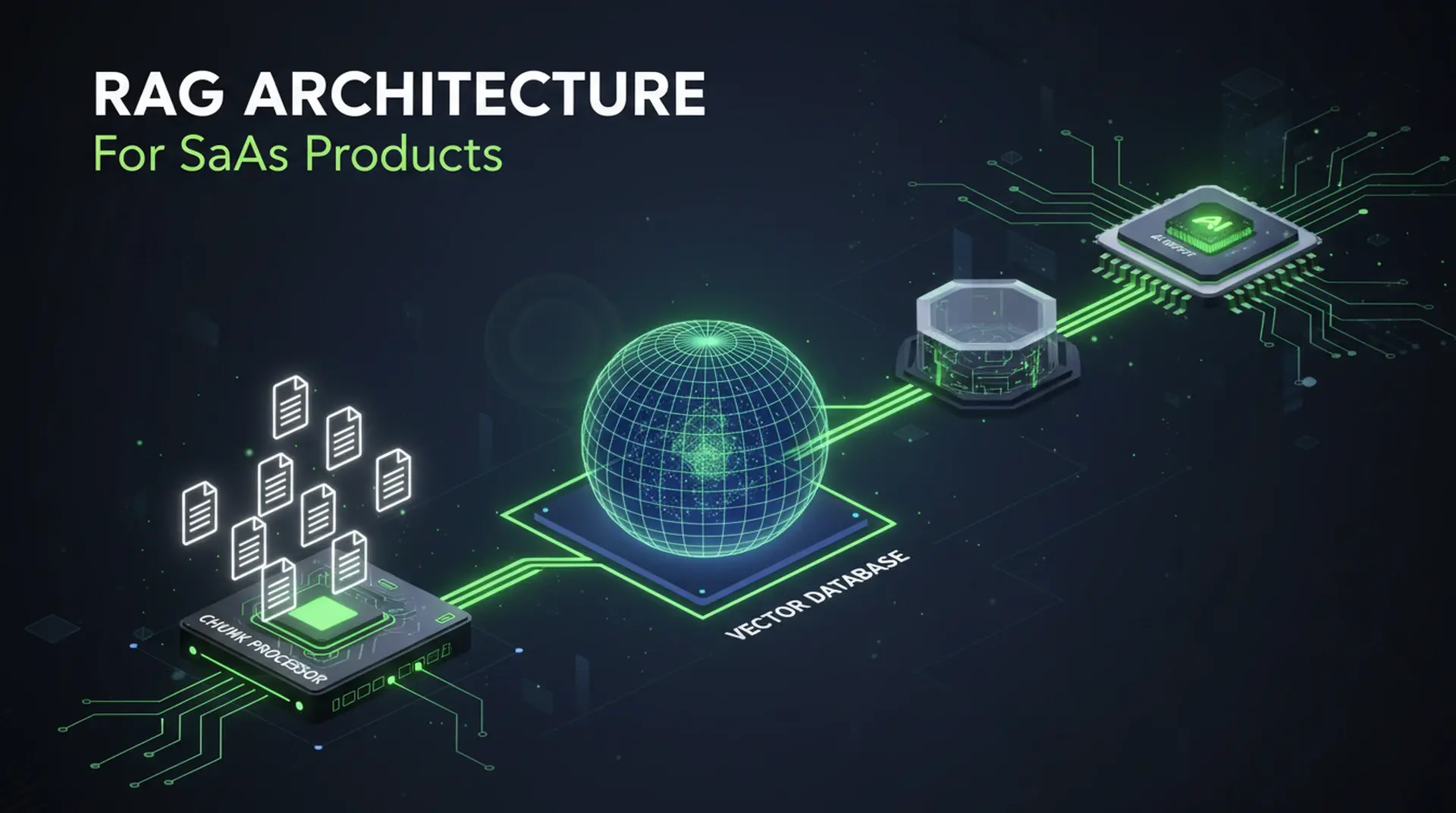

The Production RAG Architecture

    User Query
           │
           ▼
    ┌──────────────┐
    │ Query        │ → Expand query with synonyms/context
    │ Processing   │ → Generate query embedding
    └──────┬───────┘
           │
           ▼
    ┌──────────────┐
    │ Hybrid       │ → Vector similarity search (semantic)
    │ Retrieval    │ → BM25 keyword search (exact match)
    │              │ → Merge and deduplicate results
    └──────┬───────┘
           │
           ▼
    ┌──────────────┐
    │ Reranking    │ → Cross-encoder reranks top 20 → top 5
    │              │ → Filters by relevance threshold
    └──────┬───────┘
           │
           ▼
    ┌──────────────┐
    │ Generation   │ → Context + query → LLM
    │              │ → Citation extraction
    │              │ → Response validation
    └──────────────┘

Each stage has specific failure modes and optimization strategies. Let me walk through them.

Stage 1: Chunking (Where Most RAG Systems Fail)

Chunking is the process of splitting your documents into pieces small enough for embedding and retrieval. Get this wrong and everything downstream suffers.

Fixed-Size Chunking (The Default... and Often Wrong)

    // Naive fixed-size chunking: DON'T do this in production
    function fixedChunk(text: string, size: number, overlap: number): string[] {
      if (overlap >= size) {
        // Otherwise the step (size - overlap) is <= 0 and the loop never advances
        throw new Error("overlap must be smaller than size");
      }
      const chunks: string[] = [];
      for (let i = 0; i < text.length; i += size - overlap) {
        chunks.push(text.slice(i, i + size));
      }
      return chunks;
    }

Fixed-size chunks split mid-sentence, mid-paragraph, and mid-thought. The resulting chunks lack semantic coherence, which degrades embedding quality by 15-25% in my benchmarks.

Semantic Chunking (What You Should Use)

    // Semantic chunking: split on document structure.
    // Assumes the document carries an id alongside its text; splitByHeaders,
    // tokenCount, and the Chunk type are defined elsewhere.
    function semanticChunk(
      document: { id: string; text: string },
      maxChunkTokens: number = 512
    ): Chunk[] {
      const sections = splitByHeaders(document.text); // Split on H1, H2, H3
      const chunks: Chunk[] = [];

      for (const section of sections) {
        const metadata = {
          heading: section.heading,
          level: section.level,
          documentId: document.id,
        };

        if (tokenCount(section.content) <= maxChunkTokens) {
          chunks.push({ content: section.content, metadata: { ...metadata } });
          continue;
        }

        // Section too large: split by paragraphs and pack them greedily
        let currentChunk = "";
        for (const paragraph of section.content.split("\n\n")) {
          if (currentChunk && tokenCount(currentChunk + "\n\n" + paragraph) > maxChunkTokens) {
            // Adding this paragraph would exceed the budget: flush and start a new chunk
            chunks.push({ content: currentChunk.trim(), metadata: { ...metadata } });
            currentChunk = paragraph;
          } else {
            currentChunk = currentChunk ? currentChunk + "\n\n" + paragraph : paragraph;
          }
        }
        if (currentChunk) {
          chunks.push({ content: currentChunk.trim(), metadata: { ...metadata } });
        }
      }
      return chunks;
    }

Chunk Size Guidelines

| Content Type | Optimal Chunk Size | Overlap |
|---|---|---|
| Technical documentation | 300-500 tokens | 20-30% |
| Customer support articles | 200-400 tokens | 15-20% |
| Legal/compliance documents | 400-600 tokens | 25-30% |
| Product descriptions | 150-300 tokens | 10-15% |
| Code + comments | 200-400 tokens | 30% (preserve function boundaries) |

The overlap is critical. Without overlap, information that spans two chunks is lost during retrieval. A 20-30% overlap ensures that cross-boundary information appears in at least one complete chunk.
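The semantic chunker shown earlier does not add overlap on its own, so here is a minimal sketch of one way to do it: pack sentences greedily and seed each new chunk with the trailing sentences of the previous one. The function names, the word-count stand-in for a real tokenizer, and the sample sentences are all assumptions for illustration, not part of the original pipeline.

```typescript
// Approximate size by whitespace-separated words (a stand-in for a real tokenizer).
function wordCount(text: string): number {
  const trimmed = text.trim();
  return trimmed === "" ? 0 : trimmed.split(/\s+/).length;
}

// Pack units (e.g. sentences) into chunks of at most maxWords, carrying the
// tail of each chunk into the next one, up to overlapRatio * maxWords words.
function chunkWithOverlap(units: string[], maxWords: number, overlapRatio: number): string[] {
  const overlapBudget = Math.floor(maxWords * overlapRatio);
  const chunks: string[] = [];
  let current: string[] = [];
  let currentWords = 0;

  for (const unit of units) {
    const words = wordCount(unit);
    if (current.length > 0 && currentWords + words > maxWords) {
      chunks.push(current.join(" "));

      // Walk backwards through the finished chunk, keeping whole units
      // until the overlap budget is used up.
      const carried: string[] = [];
      let carriedWords = 0;
      for (let i = current.length - 1; i >= 0; i--) {
        const w = wordCount(current[i]);
        if (carriedWords + w > overlapBudget) break;
        carried.unshift(current[i]);
        carriedWords += w;
      }
      // Drop carried units if they would push the new chunk over the limit.
      while (carried.length > 0 && carriedWords + words > maxWords) {
        carriedWords -= wordCount(carried.shift() as string);
      }
      current = carried;
      currentWords = carriedWords;
    }
    current.push(unit);
    currentWords += words;
  }
  if (current.length > 0) chunks.push(current.join(" "));
  return chunks;
}

// Six three-word sentences, a 10-word budget, and 30% overlap.
const sentences = [
  "Rate limits apply.",
  "Default is 1000.",
  "Limits reset hourly.",
  "Upgrade raises limits.",
  "Contact support first.",
  "Changes apply instantly.",
];
const overlapped = chunkWithOverlap(sentences, 10, 0.3);
console.log(overlapped);
```

With these inputs the last sentence of each chunk reappears as the first sentence of the next, so a fact that straddles a boundary still shows up whole in at least one chunk. A single unit larger than the budget is emitted as its own oversized chunk; a production version would split it further.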

Stage 2: Embedding

The embedding model converts text into dense vector representations for similarity search. The model choice affects retrieval quality more than the generation model choice.
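To make "similarity search" concrete, the sketch below computes cosine similarity, one common way to compare embedding vectors. The article does not say which metric its vector database uses, so treat the metric choice as an assumption; the three-dimensional vectors are toy values standing in for real embeddings.

```typescript
// Cosine similarity between two embedding vectors of equal length.
function cosineSimilarity(a: number[], b: number[]): number {
  if (a.length !== b.length) {
    throw new Error("Vectors must have the same number of dimensions");
  }
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  if (normA === 0 || normB === 0) return 0; // avoid dividing by zero
  return dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

// Toy vectors (illustrative values only).
const queryVec = [1, 0, 1];
const similarDoc = [2, 0, 2]; // same direction as the query
const unrelatedDoc = [0, 1, 0]; // orthogonal to the query

console.log(cosineSimilarity(queryVec, similarDoc)); // close to 1
console.log(cosineSimilarity(queryVec, unrelatedDoc)); // 0
```

Cosine similarity ignores vector length and compares direction only, which is why the scaled copy of the query scores as a near-perfect match.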

Model Comparison (2026 Benchmarks)

| Model | Dimensions | MTEB Score | Cost per 1M tokens | Latency |
|---|---|---|---|---|
| Google Gemini Embedding 001 | 3072 | 68.3 | $0.006 | 40-80ms |
| Voyage AI voyage-3-large | 1024 | 67.1 | $0.06 | 40-70ms |
| Cohere Embed 4 | 1536 | ~65.2 | $0.12 | 40-80ms |
| OpenAI text-embedding-3-large | 3072 | 64.6 | $0.13 | 50-100ms |
| Cohere embed-english-v3.0 | 1024 | 64.5 | $0.10 | 40-80ms |
| BGE-M3 (self-hosted) | 1024 | 63.0 | Infrastructure only | 20-50ms |
| OpenAI text-embedding-3-small | 1536 | 62.3 | $0.02 | 30-60ms |

The landscape shifted significantly in 2025-2026. Google's Gemini Embedding 001 now leads the MTEB leaderboard at a fraction of OpenAI's cost. Cohere's Embed 4 adds multimodal support (text + images) in a single model. BGE-M3 remains the strongest self-hosted option with native support for dense, sparse, and multi-vector retrieval across 100+ languages.

For most SaaS applications, text-embedding-3-small still provides sufficient quality at the lowest cost. The quality difference between models is measurable but rarely the bottleneck... chunking and retrieval strategy matter more.

Embedding Pipeline

    import { OpenAI } from "openai";

    const openai = new OpenAI();

    async function embedChunks(chunks: Chunk[]): Promise<EmbeddedChunk[]> {
      // Batch embedding: up to 2048 inputs per request
      const batchSize = 2048;
      const results: EmbeddedChunk[] = [];

      for (let i = 0; i < chunks.length; i += batchSize) {
        const batch = chunks.slice(i, i + batchSize);
        const response = await openai.embeddings.create({
          model: "text-embedding-3-small",
          input: batch.map((c) => c.content),
        });

        // Embeddings come back in input order, so index j lines up with batch[j]
        for (let j = 0; j < batch.length; j++) {
          results.push({
            ...batch[j],
            embedding: response.data[j].embedding,
          });
        }
      }
      return results;
    }

Stage 3: Hybrid Retrieval

Pure vector search misses exact matches. If a user asks "What's the API rate limit?" and your docs say "API rate limit: 1000 requests per minute," vector search might rank a general discussion about rate limiting above the exact answer.
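The keyword side of this argument can be sketched with a small BM25 scorer run on the rate-limit example. This is a minimal illustration, not a production search index: the tokenizer is naive, `k1 = 1.2` and `b = 0.75` are commonly used defaults rather than values from the article, the IDF uses the non-negative Lucene-style variant, and the second document is an invented stand-in for a "general discussion".

```typescript
// Lowercase and split on anything that is not a letter or digit.
function tokenize(text: string): string[] {
  return text.toLowerCase().split(/[^a-z0-9]+/).filter((t) => t.length > 0);
}

// BM25 score of each document for the query.
function bm25Scores(query: string, docs: string[], k1 = 1.2, b = 0.75): number[] {
  const tokenized = docs.map(tokenize);
  const avgLen = tokenized.reduce((sum, d) => sum + d.length, 0) / Math.max(docs.length, 1);
  const queryTerms = tokenize(query);

  return tokenized.map((doc) => {
    let score = 0;
    for (const term of queryTerms) {
      const tf = doc.filter((t) => t === term).length; // term frequency in this doc
      if (tf === 0) continue;
      const docFreq = tokenized.filter((d) => d.indexOf(term) !== -1).length;
      // Rare terms get a higher weight than terms found in most documents
      const idf = Math.log(1 + (docs.length - docFreq + 0.5) / (docFreq + 0.5));
      // Length normalization: long documents need more matches to score as high
      const norm = tf + k1 * (1 - b + b * (doc.length / avgLen));
      score += idf * ((tf * (k1 + 1)) / norm);
    }
    return score;
  });
}

const sampleDocs = [
  "API rate limit: 1000 requests per minute",
  "A general discussion of how throttling protects shared services",
];
const keywordScores = bm25Scores("What's the API rate limit?", sampleDocs);
console.log(keywordScores);
```

The first document shares the exact terms "api", "rate", and "limit" with the query and gets a positive score, while the paraphrased discussion scores zero. That exact-term signal is what the keyword half of a hybrid search contributes.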

Hybrid search combines vector similarity (semantic understanding) with BM25 keyword matching (exact term matching).

    // Hybrid retrieval: vector + keyword search
    async function hybridSearch(
      query: string,
      queryEmbedding: number[],
      topK: number = 20
    ): Promise<SearchResult[]> {
      // Vector search: semantic similarity
      const vectorResults = await vectorDb.search({
        vector: queryEmbedding,
        topK: topK,
        includeMetadata: true,
      });

      // BM25 keyword search: exact term matching
      const keywordResults = await searchIndex.search(query, {
        topK: topK,
        fields: ["content", "heading"],
      });

      // Reciprocal Rank Fusion to merge results
      return reciprocalRankFusion(vectorResults, keywordResults, {
        vectorWeight: 0.6,
        keywordWeight: 0.4,
        k: 60, // RRF constant
      });
    }

    function reciprocalRankFusion(
      vectorResults: SearchResult[],
      keywordResults: SearchResult[],
      config: { vectorWeight: number; keywordWeight: number; k: number }
    ): SearchResult[] {
      const scores = new Map<string, number>();

      // rank is 0-based, so the top result contributes weight / (k + 1)
      vectorResults.forEach((result, rank) => {
        const score = config.vectorWeight / (config.k + rank + 1);
        scores.set(result.id, (scores.get(result.id) || 0) + score);
      });

      keywordResults.forEach((result, rank) => {
        const score = config.keywordWeight / (config.k + rank + 1);
        scores.set(result.id, (scores.get(result.id) || 0) + score);
      });

      // Highest fused score first. id and score are written last so they are not
      // overwritten by same-named fields on the stored document.
      return Array.from(scores.entries())
        .sort((a, b) => b[1] - a[1])
        .map(([id, score]) => ({ ...getDocument(id), id, score }));
    }

Hybrid vs. Pure Vector Benchmarks

Testing on 3 SaaS knowledge bases (10K-50K documents each):

| Metric | Pure Vector | Pure BM25 | Hybrid (0.6/0.4) |
|---|---|---|---|
| Recall@10 | 72% | 65% | 89% |
| Precision@5 | 68% | 71% | 84% |
| MRR | 0.61 | 0.58 | 0.78 |

Hybrid search outperforms either approach alone by 15-30% on recall, with gains of up to 40% in terminology-heavy domains like technical documentation and legal content. The improvement is most dramatic for queries that contain specific technical terms... exactly the queries SaaS users ask most.
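To see why fusion rewards documents that both searches agree on, here is a small worked example of the weighted Reciprocal Rank Fusion formula with the same 0.6/0.4 weights and `k = 60`. The function name `fuseRankings` and the document ids A through D are made up for illustration.

```typescript
// Weighted Reciprocal Rank Fusion over two ranked lists of document ids.
function fuseRankings(
  vectorIds: string[],
  keywordIds: string[],
  vectorWeight = 0.6,
  keywordWeight = 0.4,
  k = 60
): { id: string; score: number }[] {
  const fusedScores = new Map<string, number>();
  const addList = (ids: string[], weight: number): void => {
    ids.forEach((id, rank) => {
      // rank is 0-based, so the top hit contributes weight / (k + 1)
      fusedScores.set(id, (fusedScores.get(id) || 0) + weight / (k + rank + 1));
    });
  };
  addList(vectorIds, vectorWeight);
  addList(keywordIds, keywordWeight);

  return Array.from(fusedScores.entries())
    .map(([id, score]) => ({ id, score }))
    .sort((a, b) => b.score - a.score);
}

// Vector search ranks A, B, C; keyword search ranks C, A, D.
const fused = fuseRankings(["A", "B", "C"], ["C", "A", "D"]);
console.log(fused.map((r) => r.id).join(" > ")); // A > C > B > D
```

A scores 0.6/61 + 0.4/62 ≈ 0.0163 and C scores 0.6/63 + 0.4/61 ≈ 0.0161, while B (vector only, 0.6/62 ≈ 0.0097) and D (keyword only, 0.4/63 ≈ 0.0063) trail behind. C was only third in the vector list, yet it overtakes B because both retrievers returned it.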

Stage 4: Reranking

Retrieval returns 20 candidates, but the generation prompt in this design only has room for about 5. A reranker uses a cross-encoder model to re-score the candidates with much higher accuracy than the initial retrieval.

    // Reranking with a cross-encoder model
    async function rerankResults(
      query: string,
      results: SearchResult[],
      topK: number = 5
    ): Promise<SearchResult[]> {
      const response = await fetch("https://api.cohere.ai/v1/rerank", {
        method: "POST",
        headers: {
          Authorization: `Bearer ${COHERE_API_KEY}`,
          "Content-Type": "application/json",
        },
        body: JSON.stringify({
          model: "rerank-v3.5",
          query: query,
          documents: results.map((r) => r.content),
          top_n: topK,
          return_documents: false,
        }),
      });

      // Surface API errors here instead of failing later on a missing `results` field
      if (!response.ok) {
        throw new Error(`Rerank request failed with status ${response.status}`);
      }

      const reranked = await response.json();
      return reranked.results
        .filter((r: any) => r.relevance_score > 0.3) // Threshold filter
        .map((r: any) => results[r.index]); // Map back to the original result objects
    }

The relevance threshold (0.3 in this example) is important. If none of the retrieved documents are actually relevant to the query, it's better to return "I don't have information about that" than to hallucinate from marginally relevant context.

Note: Cohere's current recommended model is rerank-v3.5 (multilingual, unified) at $2.00 per 1,000 searches. One search equals one query with up to 100 documents. The older rerank-english-v3.0 still works but is no longer the default recommendation.
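One way to act on the threshold is to short-circuit before generation when nothing clears it. The sketch below assumes reranker hits shaped like the `index` / `relevance_score` fields used above; `selectContext`, `answerOrFallback`, and the synchronous `generate` callback are hypothetical names standing in for the real (asynchronous) LLM call.

```typescript
interface RerankHit {
  index: number; // position of the document in the candidate list
  relevance_score: number; // reranker score, higher is more relevant
}

const FALLBACK_MESSAGE = "I don't have information about that.";

// Keep only candidates whose reranker score clears the threshold.
function selectContext(documents: string[], hits: RerankHit[], threshold = 0.3): string[] {
  return hits
    .filter((h) => h.relevance_score > threshold)
    .map((h) => documents[h.index])
    .filter((doc): doc is string => typeof doc === "string"); // ignore out-of-range indexes
}

// Skip generation entirely when no candidate is relevant enough.
function answerOrFallback(
  documents: string[],
  hits: RerankHit[],
  generate: (contextDocs: string[]) => string
): string {
  const contextDocs = selectContext(documents, hits);
  return contextDocs.length === 0 ? FALLBACK_MESSAGE : generate(contextDocs);
}

const candidateDocs = [
  "Rate limit is 1000 requests per minute.",
  "Our office is closed on public holidays.",
];
const stubGenerate = (contextDocs: string[]): string => `Answer based on ${contextDocs.length} source(s)`;

const weakHits: RerankHit[] = [
  { index: 0, relevance_score: 0.12 },
  { index: 1, relevance_score: 0.05 },
];
const strongHits: RerankHit[] = [
  { index: 0, relevance_score: 0.91 },
  { index: 1, relevance_score: 0.05 },
];

console.log(answerOrFallback(candidateDocs, weakHits, stubGenerate)); // fallback message
console.log(answerOrFallback(candidateDocs, strongHits, stubGenerate)); // Answer based on 1 source(s)
```

Returning the fallback without calling the model also saves the generation cost for queries the knowledge base cannot answer.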

Stage 5: Generation with Citations

The generation prompt structure determines whether the AI feature is trustworthy or a liability.

    async function generateAnswer(
      query: string,
      context: SearchResult[]
    ): Promise<{ answer: string; citations: Citation[] }> {
      // Number each source so the model can cite it as [Source N]
      const contextText = context.map((c, i) => `[Source ${i + 1}] ${c.content}`).join("\n\n");

      const response = await openai.chat.completions.create({
        model: "gpt-4o",
        messages: [
          {
            role: "system",
            content: `You are a helpful assistant that answers questions based ONLY on the provided context.
    Rules:
    - Answer using ONLY information from the provided sources
    - Cite sources using [Source N] format
    - If the context doesn't contain enough information, say "I don't have enough information to answer that"
    - Never make up information not present in the sources
    - Be specific and include numbers/details from the sources`,
          },
          {
            role: "user",
            content: `Context:\n${contextText}\n\nQuestion: ${query}`,
          },
        ],
        temperature: 0.1, // Low temperature for factual accuracy
      });

      // message.content can be null, so fall back to an empty string
      const answer = response.choices[0].message.content ?? "";
      const citations = extractCitations(answer, context);
      return { answer, citations };
    }

The temperature of 0.1 is deliberate. For factual Q&A grounded in retrieved documents, you want the model to be as deterministic as possible; higher temperatures increase the risk of hallucinated details that aren't in the source material. GPT-4o provides the best quality-to-cost ratio for most RAG generation workloads. GPT-4 Turbo is deprecated and no longer the recommended choice.
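The `extractCitations` helper called in `generateAnswer` is not shown in the article. A minimal sketch of what it might look like follows, assuming citations appear in the `[Source N]` format the system prompt asks for; the `SourceChunk` and `Citation` shapes here are hypothetical.

```typescript
interface SourceChunk {
  id: string;
  content: string;
}

interface Citation {
  sourceNumber: number; // the N in [Source N]
  documentId: string; // id of the chunk that source number refers to
}

// Collect each distinct [Source N] marker that points at a real context entry.
function extractCitations(answer: string, context: SourceChunk[]): Citation[] {
  const pattern = /\[Source (\d+)\]/g;
  const seen = new Set<number>();
  const citations: Citation[] = [];
  let match: RegExpExecArray | null;

  while ((match = pattern.exec(answer)) !== null) {
    const sourceNumber = parseInt(match[1], 10);
    const source = context[sourceNumber - 1]; // sources are numbered from 1
    // Skip duplicates and numbers the model invented (no such source)
    if (source === undefined || seen.has(sourceNumber)) continue;
    seen.add(sourceNumber);
    citations.push({ sourceNumber, documentId: source.id });
  }
  return citations;
}

const sampleContext: SourceChunk[] = [
  { id: "doc-rate-limits", content: "API rate limit: 1000 requests per minute" },
  { id: "doc-billing", content: "Invoices are issued monthly" },
];
const sampleAnswer =
  "The limit is 1000 requests per minute [Source 1]. See also [Source 3] and [Source 1].";

console.log(extractCitations(sampleAnswer, sampleContext));
```

Dropping markers that point past the end of the context list, such as `[Source 3]` here, is a cheap check against citations the model made up.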

Cost Optimization

RAG costs scale with query volume. Here's a breakdown for 100K queries/month:

| Component | Cost Model | 100K queries/month |
|---|---|---|
| Embedding (query) | $0.02/1M tokens | ~$2 |
| Vector search | Varies by provider | $20-50 (managed), $10-20 (self-hosted) |
| Reranking | $2.00/1K searches (Cohere v3.5) | $200 |
| Generation (GPT-4o) | ~$0.013 per query (avg) | $1,300 |
| Generation (GPT-4o-mini) | ~$0.001 per query (avg) | $100 |
| Total (GPT-4o) | Sum of the above | ~$1,550 |
| Total (GPT-4o-mini) | Sum of the above | ~$350 |

With GPT-4o, the generation model is roughly 85% of the total cost. With GPT-4o-mini, generation drops to under a third of the total and reranking becomes the largest line item. For most SaaS knowledge base features, GPT-4o-mini produces answers that are 90% as good as GPT-4o at a fraction of the cost. Start with the smaller model and upgrade only for use cases where quality measurably improves revenue.
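The totals in the table can be reproduced with a few lines of arithmetic. This sketch plugs in the table's figures and assumes the upper end of the managed vector search range ($50/month); the `CostInputs` shape and `monthlyCost` function are illustrative names, not part of any library.

```typescript
interface CostInputs {
  queriesPerMonth: number;
  embeddingMonthly: number; // flat monthly estimate for query embeddings
  vectorSearchMonthly: number; // flat monthly estimate for the vector store
  rerankPer1kSearches: number; // one search = one query
  generationPerQuery: number; // average generation cost per query
}

function monthlyCost(c: CostInputs) {
  const reranking = (c.queriesPerMonth / 1000) * c.rerankPer1kSearches;
  const generation = c.queriesPerMonth * c.generationPerQuery;
  const total = c.embeddingMonthly + c.vectorSearchMonthly + reranking + generation;
  return { reranking, generation, total, generationShare: generation / total };
}

const sharedCosts = {
  queriesPerMonth: 100000,
  embeddingMonthly: 2,
  vectorSearchMonthly: 50, // assumption: top of the $20-50 managed range
  rerankPer1kSearches: 2.0,
};

const largeModel = monthlyCost({ ...sharedCosts, generationPerQuery: 0.013 });
const smallModel = monthlyCost({ ...sharedCosts, generationPerQuery: 0.001 });

console.log(Math.round(largeModel.total), Math.round(largeModel.generationShare * 100)); // 1552 84
console.log(Math.round(smallModel.total), Math.round(smallModel.generationShare * 100)); // 352 28
```

Under these assumptions generation is about 84% of the GPT-4o total but only about 28% of the GPT-4o-mini total, where the $200 reranking bill is the largest component.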

Measuring RAG Quality

The Metrics That Matter

| Metric | What It Measures | Target |
|---|---|---|
| Retrieval recall@5 | Were the correct documents in the top 5? | > 85% |
| Answer correctness | Does the answer match the ground truth? | > 80% |
| Faithfulness | Does the answer only use information from context? | > 95% |
| Answer relevance | Does the answer address the user's question? | > 90% |
| Latency (p95) | End-to-end response time | < 3 seconds |

Automated Evaluation Pipeline

    // Evaluate RAG quality on a test set
    async function evaluateRAG(testSet: TestCase[]): Promise<EvalResults> {
      const results = await Promise.all(
        testSet.map(async (testCase) => {
          const { answer, citations } = await ragPipeline(testCase.query);
          return {
            query: testCase.query,
            retrievalRecall: calculateRecall(citations, testCase.relevantDocs),
            answerCorrectness: await evaluateCorrectness(answer, testCase.expectedAnswer),
            faithfulness: await evaluateFaithfulness(answer, citations),
          };
        })
      );
      return aggregateResults(results);
    }

Build a test set of 50-100 representative queries with known correct answers. Run this evaluation after every change to chunking, embedding, or retrieval parameters. Without automated evaluation, you're optimizing blind.
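The retrieval metrics themselves are simple to compute once each test case lists its relevant document ids. Below is a minimal sketch of recall@k and mean reciprocal rank (MRR); the function names and the sample ids are illustrative and not taken from the evaluation code above.

```typescript
// Fraction of the relevant documents that appear in the top k retrieved ids.
function recallAtK(retrievedIds: string[], relevantIds: string[], k: number): number {
  if (relevantIds.length === 0) return 0;
  const topK = retrievedIds.slice(0, k);
  const hits = relevantIds.filter((id) => topK.indexOf(id) !== -1).length;
  return hits / relevantIds.length;
}

// 1 / rank of the first relevant document, or 0 if none was retrieved.
function reciprocalRank(retrievedIds: string[], relevantIds: string[]): number {
  for (let i = 0; i < retrievedIds.length; i++) {
    if (relevantIds.indexOf(retrievedIds[i]) !== -1) return 1 / (i + 1);
  }
  return 0;
}

// Average reciprocal rank across all test queries.
function meanReciprocalRank(runs: { retrieved: string[]; relevant: string[] }[]): number {
  if (runs.length === 0) return 0;
  const sum = runs.reduce((acc, run) => acc + reciprocalRank(run.retrieved, run.relevant), 0);
  return sum / runs.length;
}

const evalRuns = [
  { retrieved: ["d3", "d1", "d7", "d2", "d9"], relevant: ["d1", "d2"] },
  { retrieved: ["d5", "d4"], relevant: ["d5"] },
];

console.log(recallAtK(evalRuns[0].retrieved, evalRuns[0].relevant, 3)); // 0.5
console.log(recallAtK(evalRuns[0].retrieved, evalRuns[0].relevant, 5)); // 1
console.log(meanReciprocalRank(evalRuns)); // 0.75
```

In the first query only d1 is in the top 3, so recall@3 is 0.5, and its first relevant hit sits at rank 2 (reciprocal rank 0.5). The second query hits at rank 1, giving an MRR of (0.5 + 1) / 2 = 0.75.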

When to Apply This

- Your SaaS product has a knowledge base, documentation, or customer data that users need to query

- Customer support costs exceed $10K/month and could be reduced with self-service AI

- Your competitors are shipping AI features and you need to stay competitive

- You have at least 1,000 documents of structured content to build the retrieval index from

When NOT to Apply This

- Your data changes every few seconds (real-time trading, live monitoring)... RAG latency is too high

- You need creative generation (marketing copy, design suggestions)... RAG constrains creativity

- Your dataset is under 100 documents... a simple search bar is simpler and sufficient

- You don't have ground truth data to evaluate quality... you'll ship a feature you can't measure
